I Fell In Love With Vibe Coding. Then I Tried To Ship It

The first time I vibe coded, it felt like cheating. In the best way. A proof of concept up in minutes? What? I remember thinking: is this the future?

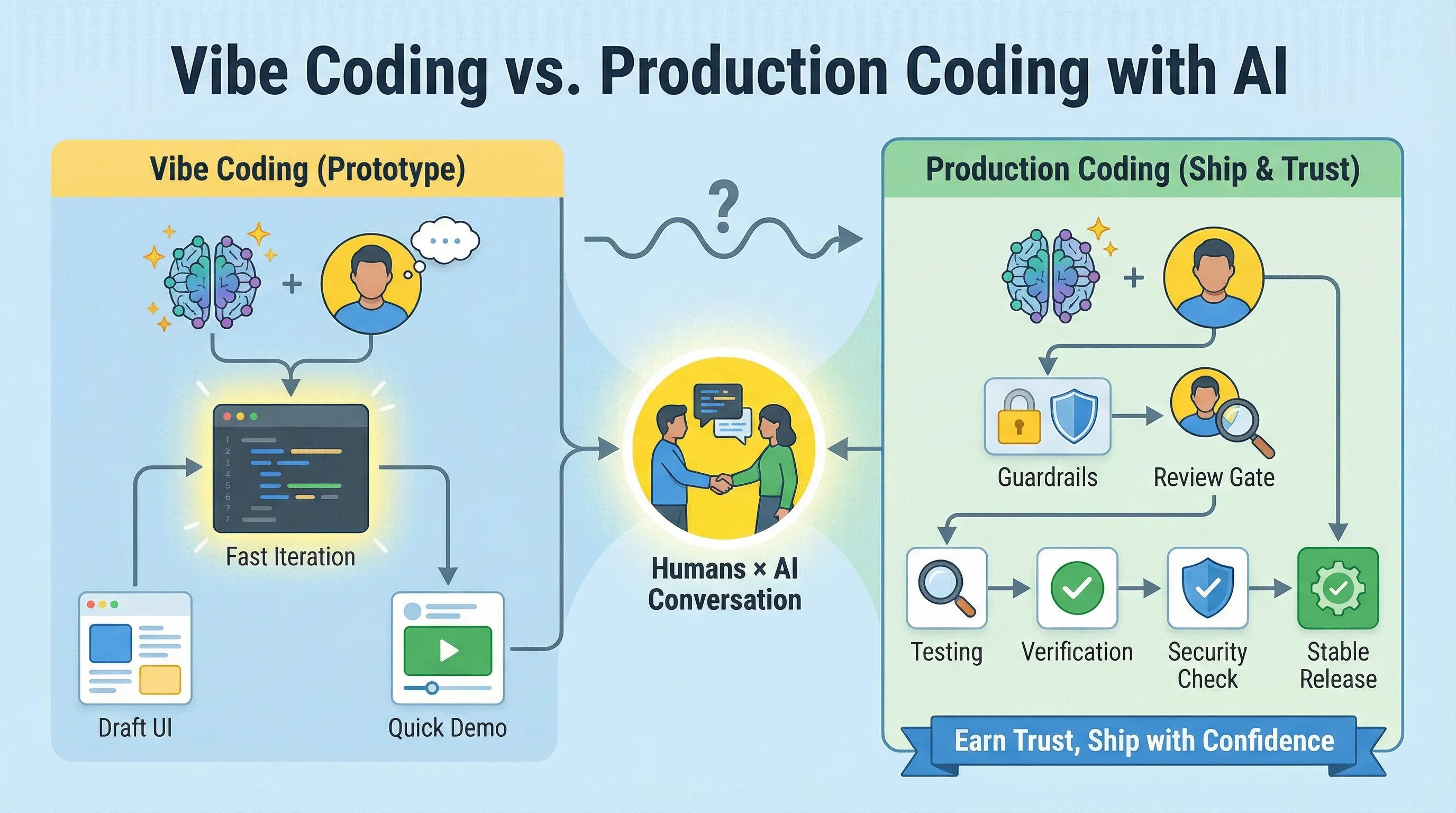

And honestly, that part still applies. For prototypes, demos, and experiments, vibe coding is pure momentum. It compresses hours - sometimes days - into a single burst of “let’s see if this idea even works.”

But then I did what everyone does after the magic trick works once: I tried to use the same approach on production code. And I learned very quickly that production doesn’t reward vibes. It rewards correctness, maintainability and repeatable execution.

1. Vibe coding is incredible (when the stakes are low)

When I say “vibe coding,” I mean building by prompting: describing what you want, watching the assistant generate code, and iterating until the outcome looks right - often without obsessing over every line.

For low‑stakes work, that mode is perfect:

- You can test a product idea without a week of scaffolding.

- You can explore UI concepts without committing to a design system decision.

- You can spike an integration to see what breaks before you invest.

The point isn’t perfection. It’s speed to feedback.

2. Production doesn’t run on vibes

Production code lives inside a real ecosystem: existing patterns, edge cases, performance budgets, security requirements, accessibility, deployment pipelines, observability, analytics. The team has to live with its decisions long after the demo.

This is where vibe coding starts to wobble. “Works on my machine” isn’t the bar. The bar is: it works in your codebase, with your conventions, under load, in production, and it stays understandable when the next person touches it.

There’s also an uncomfortable truth: AI assistance can feel faster even when it isn’t. GitHub’s controlled study on Copilot showed big speed gains on a defined task: developers using Copilot finished it roughly 55% faster. But METR ran an RCT with experienced open‑source developers working on their own repos and found the opposite on average: using early‑2025 AI tools made them about 19% slower, even though the developers believed it had sped them up.

Different setting, different result. That’s the point. If you treat AI like a universal speed button, production will humble you.

3. What fixed it for me: a constant conversation with our agents

The breakthrough wasn’t “better prompting.” It was changing the relationship. I stopped treating AI like a slot machine (prompt in, code out) and started treating it like a teammate. A peer I stay in conversation with.

The loop looks like this:

Propose, challenge, verify, repeat.

- The agent proposes an approach (and ideally a plan if you have someone like Themistocles, our planning agent).

- I challenge the agent: “Why this pattern?” “What’s the risk?” “What will this break?”

- The agent verifies: adjusts the diff, adds tests, checks assumptions, updates the plan.

- We repeat until the change is boring in the best way.

That’s Humans × AI in practice. It's not “AI on autopilot”. It is a continuous back‑and‑forth where a human is always accountable for architecture, taste, style and results.
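Here is a minimal sketch of that loop in Python. Every name in it (`Proposal`, `ReviewResult`, `run_loop`, and the stub agent and reviewer) is hypothetical and stands in for whatever agent tooling and review process you actually use. It only shows the control flow: nothing ships until a human has approved it and the checks have passed.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Proposal:
    """A candidate change from the agent (hypothetical shape)."""
    diff: str
    plan: str
    revision: int = 0


@dataclass
class ReviewResult:
    """The human's verdict on a proposal."""
    approved: bool
    objections: List[str] = field(default_factory=list)


def run_loop(
    propose: Callable[[List[str]], Proposal],
    challenge: Callable[[Proposal], ReviewResult],
    verify: Callable[[Proposal], bool],
    max_rounds: int = 5,
) -> Proposal:
    """Propose, challenge, verify, repeat until approved and verified."""
    objections: List[str] = []
    for round_no in range(max_rounds):
        proposal = propose(objections)   # agent proposes, given open objections
        proposal.revision = round_no
        review = challenge(proposal)     # human asks "why?" and "what breaks?"
        if not review.approved:
            objections = review.objections
            continue
        if verify(proposal):             # tests, linting, types, and so on
            return proposal
        objections = ["verification failed: tests or checks did not pass"]
    raise RuntimeError("no acceptable proposal; a human has to decide what next")


# Stubs standing in for a real agent and a real reviewer.
def fake_agent(objections: List[str]) -> Proposal:
    wants_tests = any("tests" in o for o in objections)
    return Proposal(diff="+ fix" + (" + tests" if wants_tests else ""), plan="small patch")


def human_review(p: Proposal) -> ReviewResult:
    if "tests" not in p.diff:
        return ReviewResult(False, ["add tests for the edge case"])
    return ReviewResult(True)


result = run_loop(fake_agent, human_review, verify=lambda p: True)
```

In this toy run the first proposal is rejected for missing tests and the second is accepted. The `max_rounds` cap is a design choice: if the loop doesn't converge, that is a signal to stop prompting and rethink the approach.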

4. “Well‑trained agents” beats “one big prompt”

Production work isn’t only about writing code. We need a clear intent, we have to stick to our best practices, and we need unit and E2E tests, plus human testing so we know the feature works as we expect.

That’s why we moved toward role‑based agents with specific responsibilities. And we gave them names and personalities too:

- Castor: backend

- Pollux: frontend

- Themistocles: planning

- Anyte: marketing

And yes, we love ancient Greek mythology.
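One way to picture role-based agents is as a small registry that routes work by ownership. The sketch below is an illustration only: the agent names and roles come from the list above, but the `Agent` class, the `route` function, and every path pattern are assumptions made up for the example, not a description of how our setup is wired.

```python
from dataclasses import dataclass
from fnmatch import fnmatch
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Agent:
    name: str
    role: str
    owns: Tuple[str, ...]  # hypothetical glob patterns, invented for this example


AGENTS = [
    Agent("Castor", "backend", ("api/*", "services/*")),
    Agent("Pollux", "frontend", ("web/*", "*.css")),
    Agent("Themistocles", "planning", ("docs/plans/*",)),
    Agent("Anyte", "marketing", ("content/*",)),
]


def route(changed_files: List[str]) -> Dict[str, List[str]]:
    """Group changed files under the first agent whose patterns match."""
    assignments: Dict[str, List[str]] = {}
    for path in changed_files:
        owner = next(
            (a.name for a in AGENTS if any(fnmatch(path, pat) for pat in a.owns)),
            "unassigned",  # nothing matched: a human decides who picks it up
        )
        assignments.setdefault(owner, []).append(path)
    return assignments


routed = route(["api/users.py", "web/App.tsx", "README.md"])
```

The useful part is the `unassigned` bucket: anything that doesn't clearly belong to one role goes back to a person instead of being guessed at.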

Our goal isn’t to replace engineers: we want to give engineers a way to leverage their skills and power up their output.

Agents also help with the less glamorous parts of shipping:

- Keeping changes consistent with existing patterns

- Catching edge cases early (before our users do)

- Reducing review burden by making diffs smaller and more testable

- Avoiding “confidently wrong” code

Security is a great example of why humans can’t leave the loop. Research has shown AI assistants can generate insecure code in a meaningful fraction of scenarios. “It compiles” is not the same as “it’s safe.”

5. A production workflow that actually holds up

If you want AI to help you ship code that works rather than impress you in a demo, this is the workflow I recommend:

- Start with acceptance criteria (definition of done). What can’t break? What’s the success metric? What’s the rollback plan?

- Make your agent show its work. Ask for a plan, risks, and alternatives before you ask for a diff.

- Keep diffs small. Smaller changes are easier to review, test, and reason about, just as they are when the code comes from a human.

- Verification is non‑negotiable. Tests, linting, types, performance checks, security posture. Whatever “production quality” means for you.

- Review like it matters. Challenge assumptions. Ask “what did you ignore?” Demand evidence.

- Ship, observe, iterate. Production is a feedback loop: treat it like one.
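The checklist above can be turned into a simple pre-merge gate. What follows is a sketch under stated assumptions: `ChangeRequest`, `blockers`, and the 300-line threshold are hypothetical, and the check names are placeholders for whatever “production quality” means in your pipeline.

```python
from dataclasses import dataclass, field
from typing import Dict, List

MAX_CHANGED_LINES = 300  # assumed team threshold, not a recommendation


@dataclass
class ChangeRequest:
    acceptance_criteria: List[str]
    rollback_plan: str
    plan_reviewed: bool          # plan, risks, and alternatives seen before the diff
    changed_lines: int
    checks: Dict[str, bool] = field(default_factory=dict)  # e.g. tests, lint, types
    human_approved: bool = False


def blockers(cr: ChangeRequest) -> List[str]:
    """Return the reasons this change is not ready to ship (empty means go)."""
    problems: List[str] = []
    if not cr.acceptance_criteria:
        problems.append("no acceptance criteria")
    if not cr.rollback_plan.strip():
        problems.append("no rollback plan")
    if not cr.plan_reviewed:
        problems.append("plan, risks, and alternatives not reviewed")
    if cr.changed_lines > MAX_CHANGED_LINES:
        problems.append(f"diff too large ({cr.changed_lines} lines)")
    if not cr.checks:
        problems.append("no verification checks recorded")
    problems += [f"check failed: {name}" for name, ok in cr.checks.items() if not ok]
    if not cr.human_approved:
        problems.append("no human review")
    return problems


cr = ChangeRequest(
    acceptance_criteria=["checkout total matches cart"],
    rollback_plan="revert commit and disable feature flag",
    plan_reviewed=True,
    changed_lines=420,
    checks={"tests": True, "lint": True, "types": False},
    human_approved=True,
)
print(blockers(cr))
```

Returning a list of reasons instead of a single pass/fail flag keeps the conversation going: each blocker is something concrete to hand back to the agent or to a teammate.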

Vibe coding still has a place in its own world. It’s just not the whole world.

Conclusion

I love vibe coding. It’s one of the fastest ways I’ve ever found to explore an idea and prove its value.

It's just that... production isn’t the place for vibes. Production is where you earn trust. Your team's trust. A client's trust. If you want AI to accelerate delivery without shipping chaos, the answer isn’t “more prompting.” You'll need trained agents, guardrails and a constant human conversation: Humans × AI, end‑to‑end, before you ship.

If you want a second set of eyes on your current AI dev workflow, I’m happy to compare notes!